The AI Decision Layer: The Missing Piece of Legacy Modernization

In the rush to achieve "AI Transformation," many organizations are making a high-stakes architectural error: they are attempting to bolt artificial intelligence directly into the belly of their legacy monoliths. While the intent is noble (leveraging gold-standard data to drive modern insights), the execution often results in a "Frankensystem" that is both fragile and prohibitively expensive to maintain.

At the heart of our modernization guidance is a single, core thesis:

Legacy systems should remain systems of record. AI belongs in the decision layer. Forcing legacy platforms to become analytics or AI engines creates fragility and slows innovation.

This is where a thoughtful approach to legacy system modernization with AI becomes critical: introducing intelligence through secure integration patterns while keeping core systems stable. By treating AI as a dedicated "Decision Layer" or "AI Facade," organizations can protect their core stability while moving at the speed of the modern market.

The Reference Model: Enhancing the Customer Experience

Consider an e-commerce environment. Value is generated at the point of interaction, when a customer makes a purchase. To provide a sophisticated up-sell recommendation, you need:

- Current Session Data: What is in their cart right now?

- Order History: What have they bought over the last five years?

- Product and Segment Data: What products are available, and what are similar customers buying?

- Click-stream Data: What did they look at but ignore?

This data is spread across different layers of the organization. Instead of forcing the e-commerce system to aggregate all of it, the AI Decision Layer acts as the orchestrator. It pulls the necessary fragments, runs the inference, and returns a consistent recommendation to any system that requests it.
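The orchestration described above can be sketched roughly as follows. This is a minimal illustration, not a reference implementation: the `DecisionLayer` class, the `RecommendationContext` fields, the source names, and the `toy_model` ranking rule are all hypothetical stand-ins for real services and a real trained model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Each fetcher is a hypothetical stand-in for a service call or lakehouse query.
Fetcher = Callable[[str], List[str]]


@dataclass
class RecommendationContext:
    cart: List[str]               # current session data
    order_history: List[str]      # past purchases
    segment_purchases: List[str]  # what similar customers are buying
    viewed_not_bought: List[str]  # click-stream data


def toy_model(ctx: RecommendationContext) -> List[str]:
    """Placeholder for real inference: suggest segment favorites the customer lacks."""
    owned = set(ctx.cart) | set(ctx.order_history)
    candidates = [p for p in ctx.segment_purchases if p not in owned]
    # Stable sort: products the customer viewed but ignored rank first.
    return sorted(candidates, key=lambda p: p not in ctx.viewed_not_bought)


class DecisionLayer:
    def __init__(self, sources: Dict[str, Fetcher],
                 model: Callable[[RecommendationContext], List[str]] = toy_model):
        self.sources = sources
        self.model = model

    def recommend(self, customer_id: str) -> List[str]:
        # Pull the fragments, then run inference on the assembled context.
        ctx = RecommendationContext(
            cart=self.sources["session"](customer_id),
            order_history=self.sources["orders"](customer_id),
            segment_purchases=self.sources["segments"](customer_id),
            viewed_not_bought=self.sources["clickstream"](customer_id),
        )
        return self.model(ctx)


layer = DecisionLayer({
    "session": lambda cid: ["laptop"],
    "orders": lambda cid: ["mouse"],
    "segments": lambda cid: ["mouse", "dock", "sleeve"],
    "clickstream": lambda cid: ["sleeve"],
})
print(layer.recommend("c-42"))  # ['sleeve', 'dock']
```

The e-commerce system never assembles this context itself; it only asks for a recommendation by customer id.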

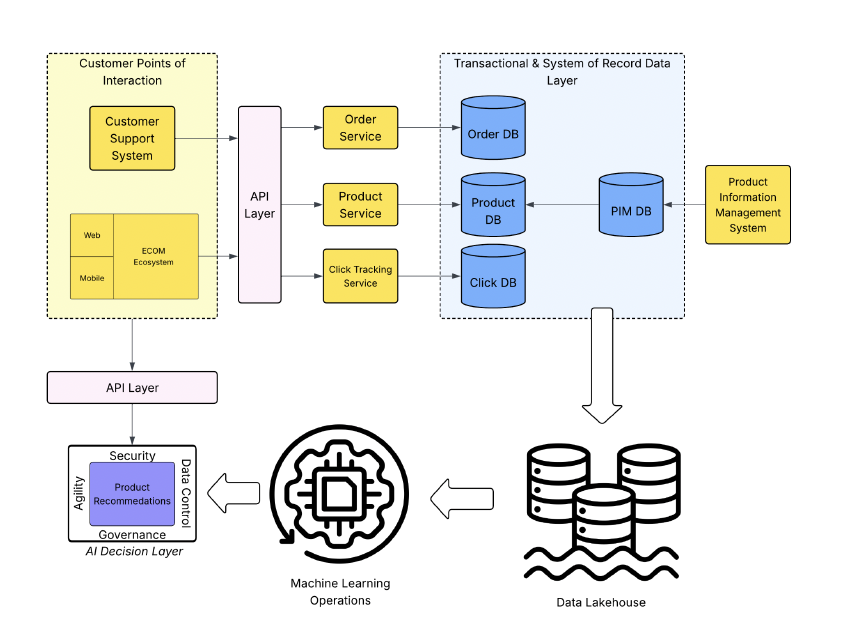

The diagram above shows how this separation works:

- The upper-left portion shows the systems that host customer points of interaction. These are the systems where you want to add AI-driven capabilities without tightly coupling the logic for interacting with your models into that layer.

- Moving to the right, the core systems are built on a service-based architecture, with each capability storing its own type of data in a dedicated data store. On the far right, a Product Information Management System serves as the Master Data Management System.

- All of these data sets can be combined to create added value when building an AI model and its capabilities. In our example, the AI capability being offered is Product Recommendations. The data sets are fed into a Corporate Data Lakehouse, which can be leveraged in many ways throughout your organization. Importantly for this example, this layer holds the combined data that feeds the AI model. A modern Data Lakehouse can serve as the shared foundation for analytics, AI, and machine learning without overburdening transactional systems.

- Machine Learning Operations (MLOps) is the standard process for building and refining the model that will eventually support the Product Recommendations.

- Lastly, and most importantly, we have the Product Recommendations Service. This is our AI Decision Layer service, built to access the model through an API-driven approach so that systems can add AI-driven product recommendations without tightly binding the model integration into the legacy system. Use cases like recommendation engines depend on more than model performance; they require the right data, architecture, and integration pattern to deliver consistent value.

- This separation means none of the existing systems had to import data directly in order to build the model.

- Any system can interact with the model through an enterprise domain-driven interface, with the details of model interaction tucked away behind the Product Recommendations Service.
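To make the API-driven approach concrete, here is a small sketch of what a business-level contract for the Product Recommendations Service might look like. The field names (`customerId`, `limit`, `recommendations`), the `handle_recommendation_request` function, and the `StubModel` class are assumptions made for illustration; they are not taken from the diagram.

```python
import json
from typing import List, Protocol


class RecommendationModel(Protocol):
    """Anything that can turn a customer id into ranked product ids."""
    def predict(self, customer_id: str, limit: int) -> List[str]: ...


class StubModel:
    """Stand-in for the deployed model; a real one would call a model endpoint."""
    def predict(self, customer_id: str, limit: int) -> List[str]:
        return ["sku-101", "sku-205", "sku-309"][:limit]


def handle_recommendation_request(body: str, model: RecommendationModel) -> str:
    """Hypothetical handler: JSON in, JSON out, no model details exposed."""
    request = json.loads(body)
    products = model.predict(request["customerId"], request.get("limit", 3))
    return json.dumps({
        "customerId": request["customerId"],
        "recommendations": products,
    })


# Two different touchpoints send the same business request...
web = handle_recommendation_request(
    '{"customerId": "c-42", "limit": 2, "channel": "web"}', StubModel())
call_center = handle_recommendation_request(
    '{"customerId": "c-42", "limit": 2, "channel": "call-center"}', StubModel())

# ...and receive the same answer, because the logic lives in one place.
print(web == call_center)  # True
```

Because callers depend only on the request and response shape, the model behind `RecommendationModel` can be retrained or replaced without touching them.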

Why Direct Integration is a Trap

Building AI capabilities directly into Systems of Record (SoR) or legacy points of contact creates several critical friction points that can stall a digital transformation.

- Stability vs. Innovation (The Velocity Gap): Legacy systems are often the bedrock of an organization because they are stable. AI, by contrast, is a field of rapid iteration. When you bake AI complexity into a legacy environment, you risk the stability of your core operations. Furthermore, the dated technology stacks of legacy systems act as an anchor, constraining the speed at which you can deploy new models or update existing ones.

- The High Cost of Tight Coupling: Integrating AI models directly into customer-facing legacy systems creates a "tight coupling" problem. As models evolve, you are forced to perform surgery on the legacy code base, a process that is notoriously slow and expensive. Because these systems were not designed for this type of integration, the work often requires "duct-tape" solutions that increase technical debt.

- Obscured Cost and Resource Allocation: When AI and transactional logic are intertwined, it becomes nearly impossible to accurately estimate the cost of either. Resource consumption, licensing, and maintenance hours bleed into one another, preventing leadership from seeing the true ROI of their AI initiatives.

- The Consistency Crisis: Most organizations have multiple customer touchpoints, such as mobile apps, web portals, and support centers. If your "up-sell" AI logic is built directly into the web portal's legacy backend, how do you ensure the call center agent sees the same recommendation? Fragmentation leads to inconsistent customer experiences and "prompt drift," in which rules are applied inconsistently across silos.

- Data Veracity and Model Bloat: AI thrives on data from across the entire enterprise ecosystem. It is rare for a single legacy system to contain all the data required for a high-performing model. Forcing a legacy data model to ingest and structure external feeds for the "Model Building Lifecycle" leads to massive bloat, further complicating an already delicate data structure.

The Benefits of AI-as-a-Service

Building AI capabilities as a dedicated service layer provides a "Clean Room" for innovation that sits safely outside your legacy footprint.

- A Unified Interface: You create a common business-driven API that serves the entire enterprise. Whether it’s a legacy green-screen terminal or a modern React app, they all talk to the AI in a consistent manner.

- Hardened Security Boundaries: By isolating AI, you can build a specific security perimeter around your models. This allows for granular control over who can access specific capabilities and provides a robust defense against malicious "prompt injection" or data exfiltration. (For a deeper look at threats like prompt injection and jailbreaks, see SPR’s practical guidance on securing LLM applications.)

- Freedom of Data Movement: In this model, data flows into a collective Data Lakehouse rather than a transactional database. Your AI researchers can iterate on models using data from across the enterprise without ever touching the production transactional systems. Each layer evolves at its own natural cadence.

- Isolated Auditing and Compliance: AI requires unique logging and observability (tracking hallucinations, bias, and token usage). Keeping these logs in the service layer prevents them from cluttering legacy system logs and makes compliance reporting significantly easier.

- Innovation Without Permission: The AI layer can adopt the latest frameworks, GPU-accelerated hardware, and LLM providers without being held back by legacy technical debt. The legacy system’s only job is to send a simple request and receive a JSON response. The decision layer also creates a cleaner path for moving AI proofs of concept into production, because teams can test, deploy, monitor, and improve models without constant changes to the legacy codebase.
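Two of the benefits above, capability-level access control and isolated audit logging, can be illustrated with a short sketch. The `AIGateway` class, the caller names, and the capability names are hypothetical. The sketch only shows where such checks and logs could live; it is not a defense against prompt injection on its own.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class AIGateway:
    # Hypothetical mapping of caller id -> capabilities that caller may invoke.
    grants: Dict[str, Set[str]]
    # Hypothetical mapping of capability name -> function that serves it.
    handlers: Dict[str, Callable[[dict], dict]]
    audit_log: List[dict] = field(default_factory=list)

    def invoke(self, caller: str, capability: str, payload: dict) -> dict:
        allowed = capability in self.grants.get(caller, set())
        # Every attempt is recorded in the service layer, not in legacy logs.
        self.audit_log.append(
            {"caller": caller, "capability": capability, "allowed": allowed})
        if not allowed:
            raise PermissionError(f"{caller} may not call {capability}")
        return self.handlers[capability](payload)


gateway = AIGateway(
    grants={"web-portal": {"recommendations"}},
    handlers={
        "recommendations": lambda p: {"recommendations": ["sku-101"]},
        "pricing": lambda p: {"price": 0},
    },
)

print(gateway.invoke("web-portal", "recommendations", {"customerId": "c-42"}))
try:
    gateway.invoke("web-portal", "pricing", {})
except PermissionError as err:
    print(err)  # web-portal may not call pricing
```

Both the permitted and the denied call end up in `gateway.audit_log`, which gives compliance reporting a single place to look.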

With the right LLM integration pattern, organizations can adopt new models and providers without tying every change to legacy release cycles.
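One way to picture such an integration pattern is a provider registry inside the decision layer. In the sketch below, `provider_a`, `provider_b`, `PROVIDERS`, and `DecisionService` are invented for illustration; real adapters would wrap a vendor SDK or an HTTP call.

```python
from typing import Callable, Dict


# Hypothetical provider adapters that share one signature: prompt in, text out.
def provider_a(prompt: str) -> str:
    return f"[provider-a] {prompt}"


def provider_b(prompt: str) -> str:
    return f"[provider-b] {prompt}"


PROVIDERS: Dict[str, Callable[[str], str]] = {"a": provider_a, "b": provider_b}


class DecisionService:
    def __init__(self, active_provider: str):
        self.active_provider = active_provider

    def describe_recommendation(self, sku: str) -> dict:
        text = PROVIDERS[self.active_provider](f"Describe why {sku} fits this cart")
        # The response shape stays fixed regardless of which provider answered.
        return {"sku": sku, "text": text}


service = DecisionService(active_provider="a")
before = service.describe_recommendation("sku-101")

# Switching providers is a change inside the decision layer only.
service.active_provider = "b"
after = service.describe_recommendation("sku-101")

print(before.keys() == after.keys())  # True
```

The legacy caller sees the same keys before and after the switch, so the change does not need to ride along with a legacy release.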

Modernization is not about replacing every legacy system; it’s about surrounding them with the intelligence they weren't built to have. By establishing a dedicated AI Decision Layer, you ensure that your "Systems of Record" stay reliable, while your "Systems of Engagement" become smarter, faster, and more secure.

For organizations still deciding where AI should live in the enterprise architecture, SPR’s AI consulting services help connect strategy, governance, data readiness, architecture, and delivery.