Talend Open Studio for Integration

Populating a Fact Table Using Talend Open Studio for Integration

Talend’s unified platform architecture continues to gain strong momentum in the area of Data Integration, Data Management, Data Quality and Big Data. Built on a comprehensive IDE, Talend offers greater control and flexibility as required with the ability to modify the generated Java code.

How does it work?

Fact Tables are an essential part of a data warehouse solution. Of course, the complexity of this process is largely dependent on individual business requirements. Our goal here is to share with you a simple ETL job (called FinanceFactTable) using Talend’s Data Integration tool. To follow this process, the assumption is made that the reader is familiar with the basic functionality of this software.

To get started, we have generated sample data with five dimensions using the adventure works data set.

- DimDate

- DimOrganization

- DimAccount

- DimScenario

- DimDepartment

For this purpose, these are all CDs and will create and populate the fact table (FactFinance).

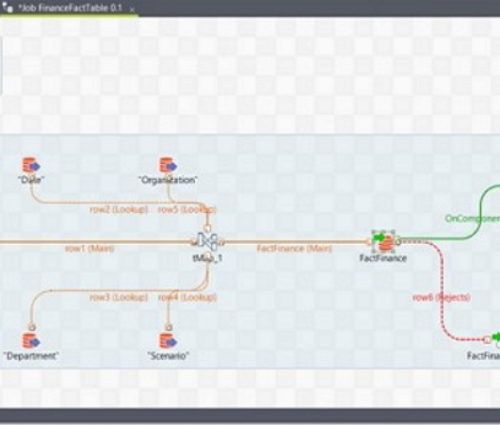

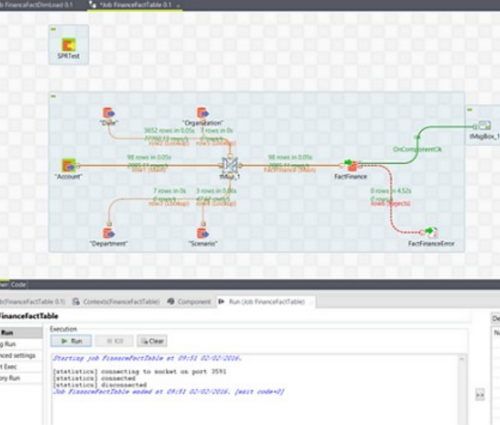

The initial step in this context is to bring in all the individual tMSSqlInput components and setup the parameters required to read the data from the source (SQL Server). Fig. 1 Below is the workspace with all components.

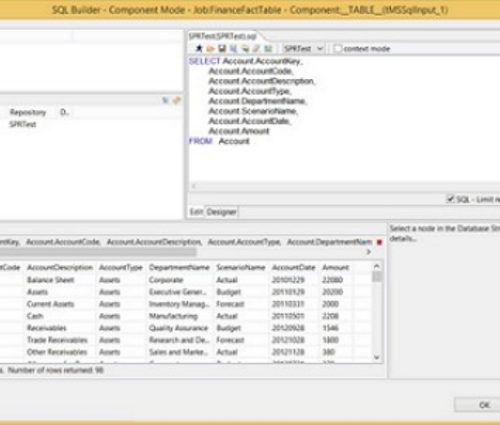

The next figure is a preview of one of the added dimensions to ascertain that we have correctly setup the parameters as required. Should there be a need to modify the query required to obtain the desired result, this is where that task can be performed. Please see Fig. 2

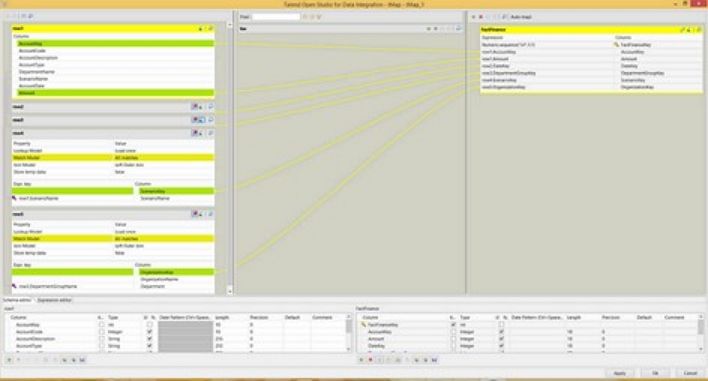

As you can already see, there are other components within the workspace above that have been purposely neglected. Please allow us to introduce you to a powerful component of Talend Data Integration, the tMap. Its primary purpose is to map input data to output data. However, in our case, we will not only use this component as an input to output connector, but also perform the required join between our dimensions. We will also add a custom parameter to it. The tMap is a powerful component of the Talend DI application and thus requires a dedicated post of its own to cover some of its features and configurations.

It is important to note that as far as joins are concerned in a Talend transformation job, one input is the primary input while the others are look-ups read in to memory. As previously alluded to, business requirements and your understanding of the data set you are working with will come into play. In our case the “DimAccount” table will be our primary table. It is the only table with the measure we care for.

Fig. 3 below shows you the tMap component of this job. You can see the join condition used to retrieve the surrogate keys, also a numeric sequence routine was added to the fact table. Depending on your requirements, the configuration of the tMap will be vital.

In the job above, two more components have also been added – the tFileOutputDelimited and tMsgBox. The role of the tFileOutputDelimited is to capture failed rows while the tMsgBox notifies us of completion.

The next step is to run the job. The figure below shows the job status and notification.

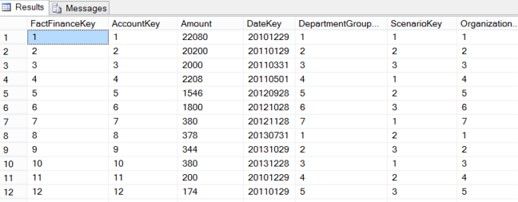

The final step is to verify the success of our job within the data warehouse. We expect a new table loaded with data. Please see figure 5.